Original article: How to Check Whether Your Website Is Registered on Google? Plus How to Register It and What to Do If It Isn’t!

Once you’re done designing a web page, the next step is getting visitors.

If your website isn’t properly registered, it may not appear on Google, the world’s most widely used search engine.

So how exactly do you get your website out there?

Today, we’ll go through how to check whether Google has indexed your website, and how to register it.


How do I Register My Website on Search Engines?

Google uses a web crawler. The term sounds technical, but a crawler is simply a bot that visits web pages, follows their links to discover new pages, and sends what it finds back to Google so those pages can be added to Google’s index.

Think of it as a little helper that gathers the pages Google can later show you in response to a search.

Once a new page has been indexed, Google’s ranking systems decide where it appears in the search results.

The process is similar to how new words are added to a dictionary.

Although the bot can find your site on its own without any registration, going through the registration process can help Google discover and index your pages sooner.
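To make the crawler idea more concrete, here is a toy Python sketch of one step every crawler performs: reading a page’s HTML and collecting the links it will visit next. It uses only the standard library; the `LinkCollector` class, the `discover_links` helper, and the example URLs are hypothetical, and real crawlers such as Googlebot do far more (scheduling, rendering, respecting robots.txt).

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Hypothetical helper: collects absolute URLs from <a href="..."> tags."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links such as "/about" against the page URL
                self.links.append(urljoin(self.base_url, value))


def discover_links(base_url: str, html: str) -> list[str]:
    """Return the links a crawler would queue up after reading this page."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    collector.close()
    return collector.links


if __name__ == "__main__":
    page = '<a href="/about">About</a> <a href="https://example.org/">Partner</a>'
    print(discover_links("https://example.com/", page))
```

A page that no other page links to gives a crawler nothing to follow, which is one reason a new site can take a while to be found.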

Let’s go through these steps one by one.

How to Check if Your Website is Registered by Google

First, open Google Search and type “site:” followed immediately by your home page URL (for example, site:example.com).

If your website is registered, pages from it will be listed in the results, usually with your home page near the top.
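If you run this check often, it can help to generate the search link instead of retyping it. The sketch below assumes Python 3 and a hypothetical `site_query_url` helper; it only builds a Google search URL for a “site:” query. You still open the link in your browser and read the results yourself, since this is meant as a manual check.

```python
from urllib.parse import urlencode


def site_query_url(domain: str) -> str:
    """Hypothetical helper: build a Google search URL for "site:<domain>"."""
    # urlencode percent-encodes the ":" so the query survives in a URL
    return "https://www.google.com/search?" + urlencode({"q": f"site:{domain}"})


if __name__ == "__main__":
    print(site_query_url("example.com"))
```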

If your website doesn’t appear in these results, you can sign up for Google Search Console to get alerts and feedback on how Google indexes your site and how it performs in search results.

What to do if your website isn’t on Google

If your website was published very recently, there’s a good chance the crawler simply hasn’t had time to find it yet.

Unfortunately, Google hasn’t publicly stated how long it takes for a typical website to be discovered.

In our experience, it can take anywhere from a few days to several months.

We’ll discuss how to optimize your website to shorten this time below.

But first, we recommend checking periodically with the “site:” method. If your site still doesn’t appear, check the following:

- Make sure your website does not contain penalized content

- Check your robots.txt file

- Check your meta robots and X-Robots-Tag settings

- Make sure your website doesn’t return 500 errors

Google filters out malfunctioning or broken websites, so making sure your website works properly is one of the best ways to improve your chances of appearing in the search results.

Penalized content

If a website contains content that violates Google’s guidelines, or has received a penalty for some other reason, Google may stop indexing it, and its pages will most likely not appear in the search results.

Google publishes an extensive set of guidelines describing unacceptable content. Check whether your website has any of the fundamental problems they describe, such as web spam or malicious content.

For your website to perform well and reach its target audience, revise it according to these principles.

If your content has been flagged for breaching the guidelines, you can fix the problems and request a review (in Search Console this is called a reconsideration request). However, there is no guarantee that the review will succeed.

Please make sure to follow these guidelines carefully so that your website may function as intended.

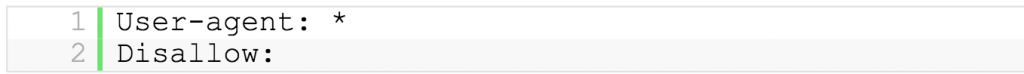

Implementing the robots.txt file

The robots.txt file, placed at the root of your site (for example, https://example.com/robots.txt), tells crawlers which parts of your site they are allowed to crawl.

In the example above, the “/” after “Disallow:” blocks crawlers from the entire site.

If you remove the “/” and leave “Disallow:” empty, crawlers are allowed to crawl the whole site.
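You can test robots.txt rules before uploading them with Python’s standard `urllib.robotparser` module. This is a minimal sketch: `googlebot_allowed` and the two sample files are illustrative, and the URLs are placeholders for your own pages.

```python
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """Return True if these robots.txt rules let Googlebot crawl url."""
    parser = RobotFileParser()
    # parse() takes the file's lines directly; read() would fetch it over HTTP
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


# "Disallow: /" blocks everything; an empty "Disallow:" blocks nothing
BLOCK_ALL = "User-agent: *\nDisallow: /\n"
ALLOW_ALL = "User-agent: *\nDisallow:\n"

if __name__ == "__main__":
    print(googlebot_allowed(BLOCK_ALL, "https://example.com/page"))
    print(googlebot_allowed(ALLOW_ALL, "https://example.com/page"))
```

Python’s parser applies the first rule that matches, while Google uses the most specific (longest) matching rule, so for files that mix Allow and Disallow lines, treat this as a rough check and confirm in Search Console.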

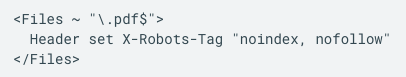

Settings for the meta robots tag and X-Robots-Tag

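The meta robots tag, placed in the `<head>` of a page, tells search engines whether they may index that page. A page that is blocked from indexing contains a tag like this:

`<meta name="robots" content="noindex">`

If this tag exists in your HTML, delete it (or change “noindex” to “index”) so that crawlers can index the page.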
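To look for a blocking robots meta tag without reading the source by hand, a short script can scan a page’s HTML. This sketch assumes you already have the HTML as a string (for example, saved from your browser); `page_blocks_indexing` is a hypothetical helper. It also checks `<meta name="googlebot">`, which Google honors as a Googlebot-specific version of the same tag, and treats “none” as blocking because it means “noindex, nofollow”.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Records the content of every robots or googlebot meta tag."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attributes without a value come through as None; normalize to ""
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.append(attributes.get("content", "").lower())


def page_blocks_indexing(html: str) -> bool:
    """Hypothetical helper: True if a robots meta tag says noindex or none."""
    finder = RobotsMetaFinder()
    finder.feed(html)  # self-closing <meta ... /> also reaches handle_starttag
    finder.close()
    for content in finder.directives:
        tokens = [token.strip() for token in content.split(",")]
        if "noindex" in tokens or "none" in tokens:
            return True
    return False
```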

The X-Robots-Tag is an HTTP header that works like the meta robots tag. It is used for files where a meta tag cannot be added, such as PDF files.

On Apache servers, it is often set in the .htaccess file. Deleting the directive that sends “noindex” allows crawlers to index those files.
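Because the X-Robots-Tag is sent as an HTTP header rather than written into the page, you have to inspect the server’s response to see it. The sketch below assumes you already have the response headers as (name, value) pairs, which is the shape `http.client.HTTPResponse.getheaders()` returns; `header_blocks_indexing` is a hypothetical helper that only looks for the “noindex” and “none” directives.

```python
import re


def header_blocks_indexing(headers: list[tuple[str, str]]) -> bool:
    """Hypothetical helper: True if any X-Robots-Tag header blocks indexing."""
    for name, value in headers:
        # Header names are case-insensitive, and the header may appear twice
        if name.lower() != "x-robots-tag":
            continue
        # Values look like "noindex, nofollow" or "googlebot: noindex"
        tokens = re.split(r"[\s,:]+", value.lower())
        if "noindex" in tokens or "none" in tokens:
            return True
    return False
```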

Making sure your website doesn’t return 500 errors

The 500 Internal Server Error is a generic error that the server returns when something has gone wrong but it cannot identify the specific problem. The crawler cannot read pages that return this error, and if the error persists, Google may drop those pages from its index.

Getting to the bottom of it can be quite technical, so we suggest consulting an expert.

Here are some of the possible causes:

- Problems with the .htaccess file

- Problems with CGI file settings

- Problems with CGI directory permissions

- Heavy server traffic

- Bugs in WordPress

- Problems with the PC itself

- Running out of memory

Because there are many possible causes, it’s best to work through them one at a time and rule each one out in turn.
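A quick way to confirm whether a page returns a 500-range error is to request it and look at the status code, which is the first thing a crawler sees. This is a minimal sketch using only the standard library; `fetch_status` and `describe_status` are hypothetical helpers, and the check tells you that an error occurs, not which of the causes above is responsible.

```python
from http import HTTPStatus
import urllib.error
import urllib.request


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Request url and return the HTTP status code the server answered with."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urlopen raises HTTPError for 4xx/5xx answers; the code is still usable.
        # Network failures (URLError) are not caught and will propagate.
        return error.code


def describe_status(code: int) -> str:
    """Label a status code and flag the 5xx range as a server error."""
    try:
        phrase = HTTPStatus(code).phrase
    except ValueError:
        phrase = "Unknown status"
    if 500 <= code <= 599:
        return f"{code} {phrase} (server error)"
    return f"{code} {phrase}"
```

For example, `print(describe_status(fetch_status("https://example.com/")))` with your own URL in place of the placeholder prints a one-line summary; a healthy page should show “200 OK”.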

Submitting a sitemap to Google

Building a sitemap for your website and submitting it through Google Search Console helps Google understand your site’s structure and find its pages.

Google’s support pages include a guide to submitting a sitemap. Sitemaps are typically XML files, and a single sitemap file can contain no more than 50,000 URLs and must be no larger than 50 MB uncompressed.
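If your CMS does not generate a sitemap for you, a small script can. This sketch builds a minimal sitemap with Python’s `xml.etree.ElementTree`, listing only the required `<loc>` entry for each page and enforcing the per-file limits of 50,000 URLs and 50 MB uncompressed. `build_sitemap` and the example URLs are hypothetical, and optional tags such as `<lastmod>` are left out.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000             # per-file URL limit
MAX_BYTES = 50 * 1024 * 1024  # 50 MB, uncompressed


def build_sitemap(urls: list[str]) -> bytes:
    """Hypothetical helper: return a minimal sitemap.xml for absolute URLs."""
    if len(urls) > MAX_URLS:
        raise ValueError(f"{len(urls)} URLs exceed the {MAX_URLS} limit; split into several sitemaps")
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # ElementTree escapes & and <
    data = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    if len(data) > MAX_BYTES:
        raise ValueError("sitemap is larger than 50 MB; split it into several files")
    return data


if __name__ == "__main__":
    print(build_sitemap(["https://example.com/", "https://example.com/about"]).decode("utf-8"))
```

You would then save the output as, for example, sitemap.xml at the root of your site and enter that URL in Search Console.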

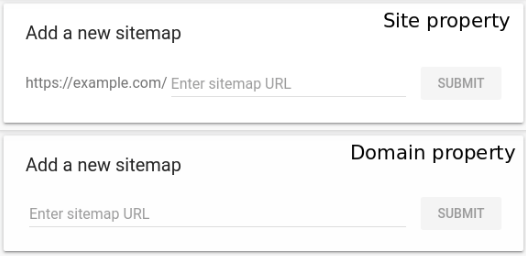

First, add your domain to Google Search Console and verify that you own it.

Next, open the Sitemaps report and enter your sitemap’s URL.

Click Submit, and that’s it: your sitemap has been submitted!

Summary

If Google has not yet indexed your website, your traffic and conversion rates can suffer severely.

This is especially true if your target audience finds websites through search engines such as Google or Bing.

Other search engines offer their own equivalents of Google Search Console (Bing Webmaster Tools, for example), with similar functions for their own crawlers.

Let’s stay vigilant and keep working to make our websites more visible.